2026 Top 3D Vision Systems for Robots: What You Need to Know

In the evolving landscape of robotics, 3D vision systems play a crucial role. They give machines the ability to perceive depth and spatial relationships, which improves how robots interact with their environment. This depth information lets robots carry out complex tasks such as navigating cluttered spaces, recognizing objects, and assembling parts.

Many industries now rely on these systems. From manufacturing to agriculture, robots equipped with 3D vision are transforming operations. However, implementing these technologies is not always straightforward: calibration difficulties and environmental factors such as lighting can degrade performance.

As we explore the top 3D vision systems for robots in 2026, it is essential to address both their potential and their limitations. Understanding how these systems work is the first step toward improving them, and a clear view of what they can and cannot do today will help us use them to their full potential.

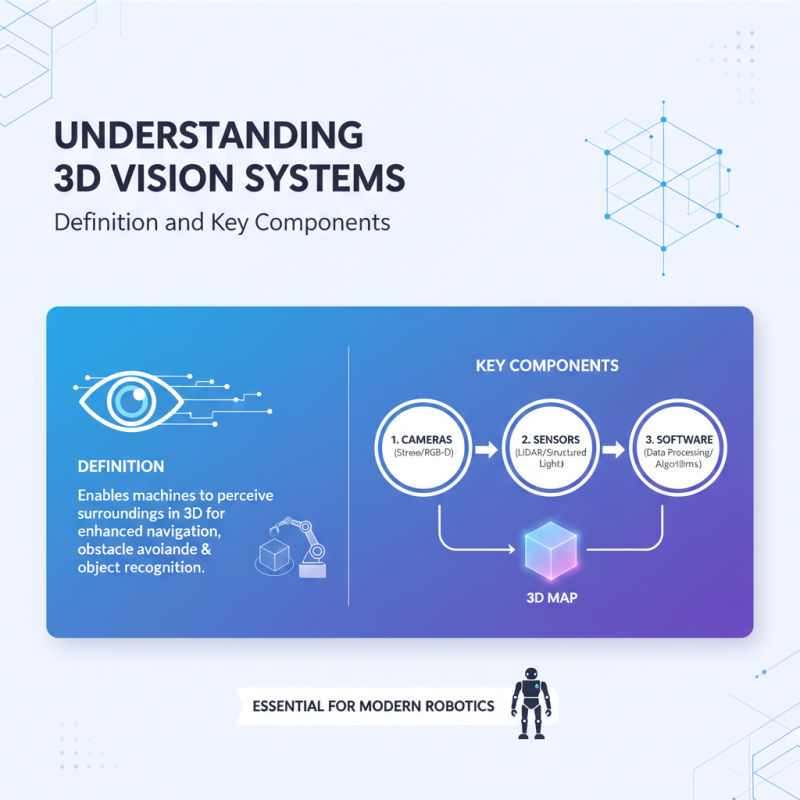

Understanding 3D Vision Systems: Definition and Key Components

3D vision systems are essential to modern robotics. They allow machines to perceive their surroundings in three dimensions, which supports navigation, obstacle avoidance, and object recognition. A typical 3D vision system combines cameras, depth sensors, and data-processing software.

Cameras capture images of a scene from different viewpoints, and software uses this multi-view data to reconstruct a 3D map. Sensors such as LiDAR add direct distance measurements that improve depth perception. However, these systems can struggle in low light or with reflective surfaces, so not every environment allows accurate depth measurement, and errors can result.
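To make the two depth principles more concrete, here is a minimal sketch of the textbook formulas behind them. It assumes an ideal, rectified stereo camera pair (depth Z = f·B/d, where f is the focal length in pixels, B the baseline between the cameras, and d the disparity between matching pixels) and a simple pulsed time-of-flight sensor of the kind LiDAR uses (distance = c·t/2 for a round-trip time t). The function names and camera values are hypothetical and do not come from any particular product.

```python
# Minimal depth calculations for two common 3D sensing principles.
# Assumes an ideal, rectified stereo pair and a simple pulsed time-of-flight sensor.
# Function names and example values are hypothetical, for illustration only.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def stereo_depth_m(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity means the point is at infinity (or the match failed).
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance from a pulsed time-of-flight echo: d = c * t / 2 (light travels out and back)."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # Hypothetical stereo camera: 700 px focal length, 0.12 m baseline, 42 px disparity.
    print(f"stereo depth: {stereo_depth_m(700.0, 0.12, 42.0):.3f} m")
    # A 20 ns round trip corresponds to roughly 3 m.
    print(f"ToF distance: {tof_distance_m(20e-9):.3f} m")
```

The stereo formula also shows why distant objects are harder to measure: as Z grows, the disparity shrinks, so a one-pixel matching error costs more depth accuracy.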

Integrating 3D vision into a robot is also complex. It requires precise calibration, because even a small misalignment can cause the system to misinterpret what it sees. Developers must continually refine these systems to improve accuracy. The technology is promising, but it is not without challenges.
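To illustrate why calibration matters, the sketch below projects a 3D point into an image with the standard pinhole camera model and then repeats the projection with a 0.5° orientation error, as if the calibration were slightly off. It assumes an ideal camera with no lens distortion; the intrinsic values (fx, fy, cx, cy), the test point, and the size of the error are all hypothetical.

```python
import math

# Sketch: how a small calibration error shifts where a 3D point lands in the image.
# Assumes an ideal pinhole camera with no lens distortion; all numbers are hypothetical.


def project(point_cam, fx, fy, cx, cy):
    """Project a 3D point (metres, camera frame) to pixel coordinates."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)


def rotate_about_y(point, angle_rad):
    """Rotate a point about the camera's vertical (y) axis."""
    x, y, z = point
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (c * x + s * z, y, -s * x + c * z)


def pixel_error(a, b):
    """Euclidean distance between two pixel positions."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


if __name__ == "__main__":
    intrinsics = dict(fx=700.0, fy=700.0, cx=320.0, cy=240.0)
    point = (0.2, 0.0, 2.0)  # 2 m in front of the camera, 0.2 m to the side

    expected = project(point, **intrinsics)
    # Simulate a calibration whose orientation estimate is off by half a degree.
    misaligned = project(rotate_about_y(point, math.radians(0.5)), **intrinsics)

    print(f"shift caused by 0.5 deg misalignment: {pixel_error(expected, misaligned):.1f} px")
```

With these values the point moves by roughly 6 pixels, which a downstream algorithm could easily read as a different position on the object. Averaging this kind of pixel error over many known points (the reprojection error) is a common way to judge calibration quality.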

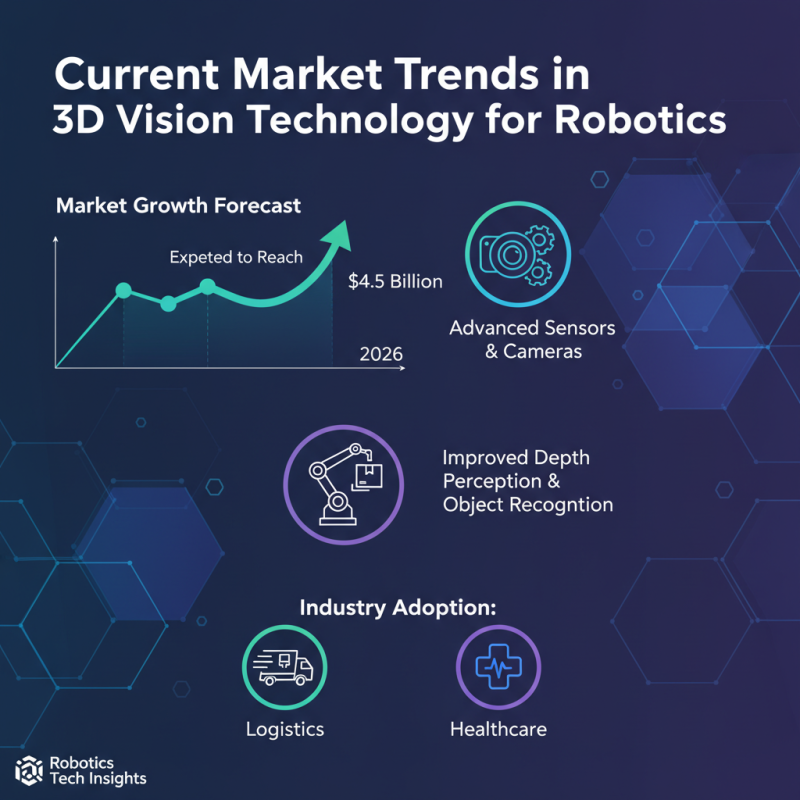

Current Market Trends in 3D Vision Technology for Robotics

The market for 3D vision technology in robotics is growing notably. One recent report projects that it will reach $4.5 billion by 2026. Manufacturers are adopting advanced sensors and cameras that offer better depth perception and object recognition, improving robotic performance in industries such as logistics and healthcare.

Despite this positive outlook, challenges persist. Many companies struggle to integrate these systems into existing workflows, which can create inefficiencies, and some 3D vision systems perform poorly in low light, which limits where they can be used. In one survey, 35% of organizations reported difficulties with implementation.

Demand for skilled professionals in this field also continues to outstrip supply. Companies struggle to hire experts who can manage and optimize these technologies, and this gap puts productivity at risk. As the market evolves, businesses will need to address these issues proactively to sustain growth.

Top 3D Vision Systems: Features and Capabilities Comparison

In 2026, 3D vision systems for robots are evolving rapidly. Systems now use a range of depth sensors, from stereo cameras to LiDAR, and each offers capabilities that make it better suited to some tasks than others. Recent industry reports indicate that over 44% of warehouses have adopted advanced vision systems in their automated processes.

Understanding these differences is crucial. Some systems excel at real-time processing, which makes them well suited to dynamic environments; others prioritize precision, which intricate assembly tasks require. Performance can also vary between deployments and lead to inconsistent results, so choosing a system requires careful consideration of the intended application.

Tips: When evaluating 3D vision systems, start from your specific needs. Weigh processing speed against accuracy, and test how each system performs under different lighting conditions. No system is flawless; each has limitations. Gathering feedback from industry peers can help you understand how various solutions perform in practice.
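One simple way to run the lighting test suggested above is to point the sensor at a flat target placed at a known distance, record repeated depth readings under each lighting condition, and compare the bias (average error) and noise (spread) of those readings. The sketch below assumes exactly that setup; the function name and the readings are made up for illustration and are not measurements from any real system.

```python
from statistics import mean, stdev

# Sketch of a lighting test: repeated depth readings of a static, flat target.
# The readings below are invented for illustration only.


def summarize_depth_readings(readings_m, true_distance_m):
    """Return (bias, noise) in metres: mean error and sample standard deviation."""
    if len(readings_m) < 2:
        raise ValueError("need at least two readings")
    bias = mean(readings_m) - true_distance_m
    noise = stdev(readings_m)
    return bias, noise


if __name__ == "__main__":
    true_distance_m = 1.000  # target placed 1 m from the sensor (hypothetical setup)
    trials = {
        "bright": [1.001, 0.999, 1.000, 1.002, 0.998],
        "dim": [1.006, 0.991, 1.010, 0.995, 1.003],
    }
    for condition, readings in trials.items():
        bias, noise = summarize_depth_readings(readings, true_distance_m)
        print(f"{condition}: bias={bias * 1000:+.1f} mm, noise={noise * 1000:.1f} mm")
```

Reporting bias and noise separately helps with the speed-versus-accuracy trade-off: a consistent bias can often be corrected in calibration, while high noise usually cannot.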

2026 Top 3D Vision Systems for Robots: Features and Capabilities Comparison

| System Model | Depth Accuracy | Field of View | Operating Range | Image Processing Speed | Connectivity Options |
|---|---|---|---|---|---|
| Model A | ±1 mm | 120° | 0.5 - 10 m | 30 fps | Ethernet, USB |
| Model B | ±0.5 mm | 90° | 0.3 - 5 m | 60 fps | Wi-Fi, Bluetooth |
| Model C | ±2 mm | 110° | 1 - 8 m | 25 fps | RS-232, Ethernet |
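The comparison table can also be turned into a simple screening step. The sketch below encodes its three rows and keeps only the models that meet a set of requirements; the requirement values are hypothetical, and the class and function names are illustrative. It also estimates how wide a scene each model covers at a given distance using w = 2·Z·tan(FOV/2), assuming the listed field of view is horizontal, which the table does not state.

```python
import math
from dataclasses import dataclass

# Sketch: screening the models from the comparison table against requirements.
# Assumes the table's field of view is horizontal; requirement values are hypothetical.


@dataclass(frozen=True)
class VisionSystem:
    name: str
    depth_accuracy_mm: float  # the ± value from the table
    fov_deg: float
    range_min_m: float
    range_max_m: float
    fps: float


SYSTEMS = [
    VisionSystem("Model A", 1.0, 120.0, 0.5, 10.0, 30.0),
    VisionSystem("Model B", 0.5, 90.0, 0.3, 5.0, 60.0),
    VisionSystem("Model C", 2.0, 110.0, 1.0, 8.0, 25.0),
]


def coverage_width_m(fov_deg: float, distance_m: float) -> float:
    """Width of the scene visible at a given distance: w = 2 * Z * tan(FOV / 2)."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def shortlist(systems, max_error_mm, work_min_m, work_max_m, min_fps):
    """Keep systems that are accurate enough, cover the working range, and are fast enough."""
    return [
        s for s in systems
        if s.depth_accuracy_mm <= max_error_mm
        and s.range_min_m <= work_min_m
        and s.range_max_m >= work_max_m
        and s.fps >= min_fps
    ]


if __name__ == "__main__":
    # Hypothetical requirements: ±1 mm or better, 0.5-4 m working distance, at least 30 fps.
    candidates = shortlist(SYSTEMS, max_error_mm=1.0, work_min_m=0.5, work_max_m=4.0, min_fps=30.0)
    for s in candidates:
        print(f"{s.name}: covers about {coverage_width_m(s.fov_deg, 2.0):.2f} m wide at 2 m")
```

Hard filters like these only narrow the field; the remaining candidates still need hands-on testing in the target environment, since datasheet figures rarely capture lighting or surface effects.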

Industry Applications of 3D Vision Systems in Robotics

3D vision systems are transforming robotics across many industries, with agriculture, manufacturing, and logistics among the most prominent. In agriculture, robots equipped with 3D vision identify ripe fruit and navigate complex environments such as orchards. Challenges remain, however: robots sometimes misidentify produce, which leads to errors.

In manufacturing, 3D vision supports quality control and assembly. Machines inspect parts for defects to maintain high standards, and they can work faster than humans. Yet implementation can be expensive, and not every company can afford these systems, which raises questions about accessibility across the industry.

Logistics also benefits significantly. Robots scan and sort packages precisely and navigate warehouses efficiently, reducing the need for manual labor. However, technical failures can disrupt operations, and these systems require regular updates and maintenance. Integrating 3D vision into robotics brings both potential and pitfalls, and both deserve careful consideration as the field moves forward.

Future Predictions: The Evolution of 3D Vision in Robotic Solutions

The future of 3D vision in robotics holds considerable potential. As the technology advances, we can expect more sophisticated designs and greater processing capability. Robots will perceive depth and their surroundings more accurately, leading to better navigation and object recognition.

However, challenges remain. Current 3D vision systems struggle in low light, and algorithms may misinterpret data during complex tasks. Developers must keep refining these technologies and testing their limits, because real-world deployments often reveal flaws that theoretical models overlook.

As 3D vision evolves, the focus must stay on real-world usability. Understanding the environment will become crucial as robots adapt to settings ranging from factories to homes, and anticipating human interaction will be essential. Balancing sophistication with practicality will define future achievements in robotics, and accepting that these systems will never be perfect may lead to more innovative solutions.
